XML Sitemap Assisted Redirects: Advanced White Hat SEO

One of the most critical times for a site’s rankings occurs when there is a massive shift in URL structure across the site. Unfortunately, this is a common prescription for sites with unruly URLs with multiple parameters. Creating pretty, canonical URLs is easy enough, as is mapping old URLs to new with 301 redirects, but preventing duplicate content issues can be problematic.

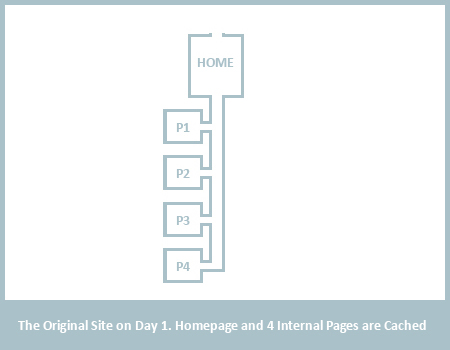

Each page on the web represents a destination that can be reached by links. Theoretically, without XML Sitemaps (or similar forms of direct page submission), there would be no way for Googlebot to find pages that are not connected by links. In our first example image, this site has a homepage and 4 subpages, connected by links, all of which have been cached by Google.

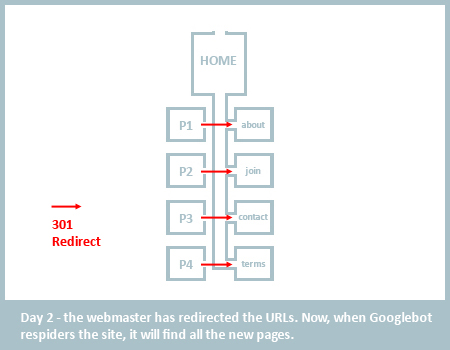

Let’s assume that these 4 subpages have terrible URLs, so the webmaster decides to rewrite the URLs to /about, /contact, /join and /terms. In the typical methodology, the webmaster 301 redirects all the old URLs to the nice URLs. Googlebot respiders the site and finds all the new pretty URLs. But herein lies the problem. Can you spot it?

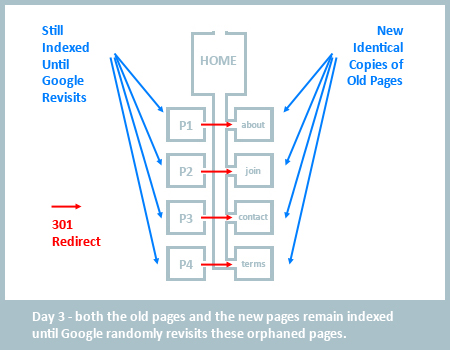

When rewriting the site and the URLs, the webmaster has effectively orphaned all former subpages on the site. Aside from pre-existing external backlinks to these pages, there is no way for Googlebot to follow a natural link course to reach the old pages, find the 301 redirect, and correctly remove them from the index. More importantly, unlike normal spidering where Google finds one page and uses it to find others on your site, Googlebot is redirected directly into your new site hierarchy, making it nearly impossible for Google to quickly correct the old URL structure. This is why sites that change URL structure sitewide often see temporary increases in the number of indexed pages. Because the content has not changed, we have a potential duplicate content issue. Unless Google has queued up these old pages to be revisited, it can be days if not weeks before Google revisits the old URLs and finds the 301 redirects, subsequently removing the duplicate content from the index.

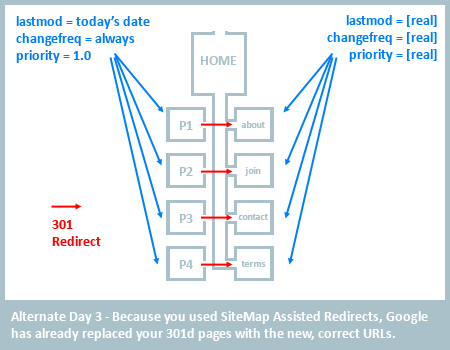

This is where Sitemap Assisted Redirects become effective. We can use XML sitemaps to coax the bots to revisit the old pages more rapidly, thus greatly lessening the likelihood of duplicate content.

The first step is to create a sitemap with both the old and new URLs. The old URLs should have their lastmod element set to today’s date, the changefreq should be set to always, and priority set to 1.0. This is basically giving each of your old pages a powerbar and a couple shots of espresso. Google now believes these pages are your most important and have been recently updated. Your new pages should be listed accurately – changefreq should be how often you update the content on the page, priority should be set appropriately, and lastmod should be set to the last day you updated the content (not when you simply changed the URL).
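To make this step concrete, here is a minimal Python sketch of how such a sitemap could be generated. The function name `build_sitemap`, the dictionary layout for new pages, and the example.com URLs are assumptions for illustration only; the tag names and the namespace come from the sitemaps.org protocol.

```python
from datetime import date
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(old_urls, new_pages, today=None):
    """Return sitemap XML listing old URLs (boosted) and new pages (accurate).

    old_urls  -- iterable of old, now-redirected URLs (hypothetical input)
    new_pages -- iterable of dicts with loc, lastmod, changefreq, priority
    """
    today = today or date.today()
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)

    def add(loc, lastmod, changefreq, priority):
        url = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as & in the URL for us.
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
        ET.SubElement(url, "changefreq").text = changefreq
        ET.SubElement(url, "priority").text = priority

    # Old URLs: lastmod of today, changefreq "always", priority 1.0.
    for loc in old_urls:
        add(loc, today.isoformat(), "always", "1.0")

    # New URLs: listed with their real values.
    for page in new_pages:
        add(page["loc"], page["lastmod"], page["changefreq"], page["priority"])

    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_sitemap(
        ["http://www.example.com/page.php?id=1&cat=2"],  # made-up old URL
        [{"loc": "http://www.example.com/about", "lastmod": "2009-01-15",
          "changefreq": "monthly", "priority": "0.5"}],
    ))
```

Passing `today` explicitly is only there to make the output reproducible; in normal use you would leave it out so the old URLs always carry the current date.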

This will coax Googlebot to quickly spider the old pages. When Google visits the old pages, it will find the 301s and remove the old URLs from the index, replacing them with the corrected new URLs. As soon as this has occurred, remove the old URLs from your sitemap and you are good to go.
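The cleanup step can be sketched the same way. The helper below, `prune_old_urls`, is a hypothetical name, and it assumes you keep your own list of the old URLs; it simply drops every sitemap entry whose `loc` matches one of them. Deciding when Google has actually dropped the old URLs is still a manual check.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def prune_old_urls(sitemap_xml, old_urls):
    """Return the sitemap XML with every <url> entry for an old URL removed."""
    # Serialize with the sitemap namespace as the default (no ns0: prefixes).
    ET.register_namespace("", SITEMAP_NS)
    root = ET.fromstring(sitemap_xml)
    old = set(old_urls)

    # Iterate over a copy because we remove children while looping.
    for url in list(root.findall(f"{{{SITEMAP_NS}}}url")):
        loc = url.find(f"{{{SITEMAP_NS}}}loc")
        if loc is not None and loc.text and loc.text.strip() in old:
            root.remove(url)

    return ET.tostring(root, encoding="unicode")
```

Run it against the combined sitemap from the first step with the same list of old URLs, then resubmit the result.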

This is a great tip and I’ve tried it on a client website. The speed at which Google is redirecting the old version to the new one is really good.

Thanks for this tip!

Been trying out this tactic on a few sites whose URLs have been changed… however I just noticed that Google Webmaster Tools now flags up redirected URLs as errors and refuses to accept the sitemap until these are fixed…have you found this to be the case?

I guess an alternative is to place a ‘legacy’ HTML site map on the site with the old URLs so that Google follows them and updates the redirects?

Although to be honest I’ve found that Google is sporadic at best at updating these URLs…

In my sitemap, the topic URL looks like /index.php/topic,8.0.html. How can I turn this into /topicname.html?

How do I do this? Please help me out.

Thank you for this blog post. This is something I will now recommend and carry out in my own work. I have just taken on a new client for whom I will carry this out.

I don’t know how to redirect my error sitemap to the correct one. I’m using the TYPO3 CMS, please help.

Great article

I have recently been dealing with a situation similar to this.

Webmaster tools is reporting the following:

– URLs not followed

When we tested a sample of URLs from your Sitemap, we found that some URLs redirect to other locations. We recommend that your Sitemap contain URLs that point to the final destination (the redirect target) instead of redirecting to another URL.

Your recommendation is to add both URLs (old and new) to the sitemap of the affected web site? I don’t see how the new URLs correlate to the old URLs in the sitemap other than the fact they are being 301 redirected.

@jeff @jaamit – Great! Now you know that Google has become aware of all the redirected URLs! I know it may be an error, but you have just forced Google to become aware that all of those old URLs have changed.